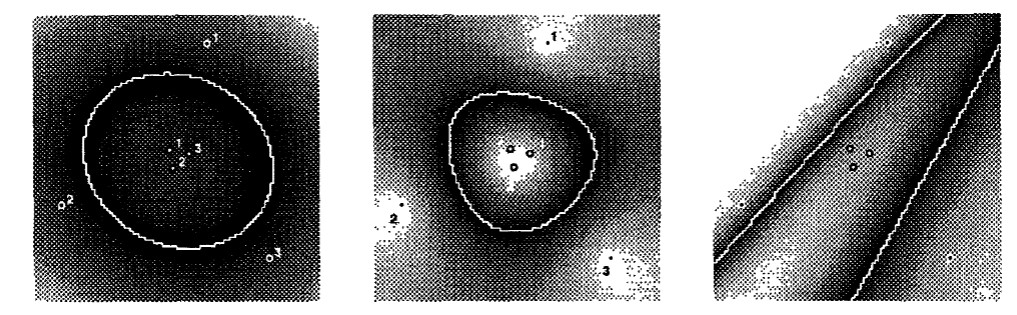

Original results from 1992 paper

“Nothing is more practical than a good theory.” — Vladimir Vapnik

Vladimir Vapnik arrived at Bell Labs from Moscow in the early 1990s, already in his 50s. He brought three decades of statistical learning theory the Western world had never seen. From 1961 to 1990, he had worked on one question: under what conditions can you guarantee that a learning algorithm generalises from training data? Mathematics that the Cold War had kept invisible.

His timing was perfect. Machine learning systems were hitting production and failing spectacularly. Models that worked in the lab struggled in real-world applications, because training accuracy alone said little about how they would behave in use. Companies needed to know whether their systems would hold up on unseen data. Vapnik had spent thirty years proving exactly when you could make that promise.

In 1995, Vapnik and Corinna Cortes published the paper that changed machine learning. The insight was to maximise the margin between classes when drawing decision boundaries. Wider margins meant better generalisation. The mathematics let you calculate performance bounds before deploying the model.
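
To make the margin idea concrete, here is a minimal sketch in plain Python. The toy points, the two candidate boundaries, and the helper name `geometric_margin` are all made up for illustration; none of them come from the original papers. The sketch only measures the margin of a given line. It does not solve the optimisation problem that finds the widest one.

```python
import math

def geometric_margin(w, b, points, labels):
    """Smallest signed distance from any point to the line w.x + b = 0.

    The result is positive only if every point is on its correct side.
    """
    norm = math.sqrt(sum(wi * wi for wi in w))
    return min(
        y * (sum(wi * xi for wi, xi in zip(w, x)) + b) / norm
        for x, y in zip(points, labels)
    )

# Toy 2-D data (hypothetical): two classes labelled -1 and +1.
points = [(1.0, 1.0), (2.0, 1.0), (4.0, 3.0), (5.0, 3.0)]
labels = [-1, -1, 1, 1]

# Two candidate boundaries; both classify every point correctly.
w_a, b_a = (1.0, 0.0), -3.0   # vertical line x = 3
w_b, b_b = (1.0, 1.0), -5.0   # diagonal line x + y = 5

margin_a = geometric_margin(w_a, b_a, points, labels)
margin_b = geometric_margin(w_b, b_b, points, labels)

print(f"margin of x = 3:     {margin_a:.3f}")
print(f"margin of x + y = 5: {margin_b:.3f}")
```

Both lines get every training point right, so training accuracy cannot tell them apart. The diagonal line leaves more room on either side of the closest points, and that extra room is what a maximum-margin classifier looks for.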

Guarantees mattered. Deny someone a loan and you had to explain why. Gene sequencing data cost thousands of dollars per sample, so you could not simply collect more. The mathematics of SVMs put bounds on how well the system would generalise from the training data to new cases.

The kernel trick made it work. Vapnik initially resisted because the kernel trick came from his rivals in Moscow, but his colleagues tried it anyway, and it worked. Everyone thought you needed supercomputers to separate data in high dimensions. Text documents generated thousands of features. Gene expression arrays had tens of thousands of measurements. The kernel trick proved them wrong. Compute in low dimensions. Make decisions as if you were working in high dimensions. This was the breakthrough that made SVMs practical.
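
A small sketch of "compute in low dimensions, decide as if in high dimensions", using the homogeneous degree-2 polynomial kernel as an illustrative choice. The vectors and the function names (`explicit_features`, `poly2_kernel`) are assumptions made up for this example, and the stdlib-only Python is not meant to reflect how any production SVM is implemented.

```python
import math
from itertools import product

def dot(u, v):
    """Ordinary inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

def explicit_features(x):
    """Map x to all ordered pairwise products x_i * x_j (n**2 features)."""
    return [a * b for a, b in product(x, repeat=2)]

def poly2_kernel(x, z):
    """Degree-2 polynomial kernel: (x . z) ** 2, computed in n dimensions."""
    return dot(x, z) ** 2

# Hypothetical 3-D inputs.
x = [1.0, 2.0, 3.0]
z = [0.5, -1.0, 2.0]

# The long way: build 9 features for each vector, then take the inner product.
high_dim = dot(explicit_features(x), explicit_features(z))

# The kernel way: one 3-D inner product, then square it.
low_dim = poly2_kernel(x, z)

print(len(explicit_features(x)), high_dim, low_dim)
```

The two numbers agree, but the kernel never builds the larger feature vector. With 10,000 input features the explicit map would need 100 million entries per example, while the kernel still costs a single 10,000-term inner product.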

SVMs took over, and by the early 2000s they were being used to tackle countless real-world problems. Banks used them for credit scoring. Pharmaceutical companies designed drug discovery pipelines around them. Email providers deployed them to filter spam. Performance guarantees you could calculate in advance were a remarkable innovation. SVMs provided decisions you could explain to regulators, were computationally efficient, and worked when data was scarce.

Vapnik created a profession. Before SVMs, you built systems and hoped they worked. After SVMs, you could calculate bounds on performance before building anything. Data science had arrived. You could hire mathematicians and statisticians and teach machine learning as a discipline.

Every data scientist now learns what Vapnik spent his career developing. SVMs still power fraud detection, spam filters, medical diagnostics. They stopped being AI the moment they became essential.

Further Reading

- Boser, B.E., Guyon, I., & Vapnik, V.N. (1992). “A Training Algorithm for Optimal Margin Classifiers.” COLT ’92.
- Cortes, C. & Vapnik, V. (1995). “Support-Vector Networks.” Machine Learning, 20(3), 273-297.
- Law, H. (2025). “Bell Labs’ last trick: Support Vector Machines.” Learning From Examples.