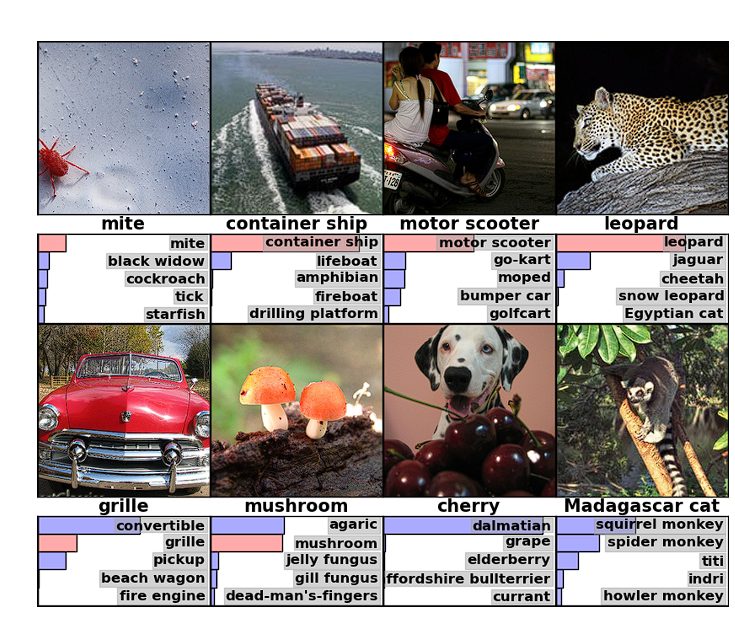

ImageNet test images

“That moment was pretty symbolic to the world of AI because three fundamental elements of modern AI converged for the first time.” — Fei-Fei Li, 2024

In 2012, a graduate student trained a neural network in his bedroom on two gaming GPUs. It beat every major AI lab in the world.

The competition was the ImageNet Large Scale Visual Recognition Challenge. AlexNet, the network he built, won by an unprecedented 10.8 percentage points. No other result in the competition’s history came close. Every other team used hand-engineered features fed into traditional classifiers. AlexNet learned its own features from the data.

Alex Krizhevsky built it. He was a graduate student at the University of Toronto, working under Geoffrey Hinton. Ilya Sutskever, another of Hinton’s students, recognized that Krizhevsky’s GPU code could tackle ImageNet. Between the three of them, they beat every major lab in the world.

The breakthrough happened because three conditions aligned for the first time.

- Data. By 2012, Fei-Fei Li’s ImageNet project had assembled over a million labelled images across a thousand categories. Data at this scale was needed to make training deep networks possible. Li had spent years building this dataset, hiring workers through Amazon Mechanical Turk to label millions of images by hand. Most of the field thought it was wasted effort.

- Compute. GPUs were designed to render graphics fast enough for gaming. In 2007, NVIDIA made them programmable for general computation. Krizhevsky saw the opportunity and wrote custom code that mapped neural network training onto GPU architecture. Each card had only 3 GB of memory, so he split the network across two GPUs and designed communication between them at specific layers. He made it work on consumer hardware that cost $500.

- Algorithm. Backpropagation had existed since 1986, so why hadn’t anyone built this before? During training, error signals pass backward through the network layer by layer. With the activation functions used at the time, those signals shrank at every layer. By the time they reached the early layers, they had all but vanished, which made deep networks impractical to train. Krizhevsky addressed this with the rectified linear unit (ReLU), an activation function that passes positive inputs through unchanged and zeroes out negative ones. For active units the gradient no longer shrank at each layer, and training ran about six times faster. He also used dropout, randomly switching off half the neurons during each training pass, which forced the network to learn robust patterns rather than rely on any single pathway.
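The two algorithmic ideas above can be sketched in a few lines of plain Python. This is a toy illustration, not Krizhevsky's GPU code. It assumes a chain of 8 layers with every weight set to 1, so that only the activation function affects the backward signal, and it uses a logistic sigmoid as a stand-in for the older saturating activations. The function names (`backprop_scale`, `dropout_train`, `dropout_test`) and the sample values are hypothetical.

```python
import math
import random


def sigmoid_grad(x):
    """Derivative of the logistic sigmoid; never larger than 0.25."""
    s = 1.0 / (1.0 + math.exp(-x))
    return s * (1.0 - s)


def relu_grad(x):
    """Derivative of ReLU: 1 for positive inputs, 0 otherwise."""
    return 1.0 if x > 0 else 0.0


def backprop_scale(grad_fn, pre_activations):
    """Multiply the local activation derivatives along a chain of layers.

    Assumption: weights are ignored (treated as 1), so the result isolates
    how much the activation function alone scales the error signal.
    """
    scale = 1.0
    for x in pre_activations:
        scale *= grad_fn(x)
    return scale


def dropout_train(activations, rng, drop_prob=0.5):
    """Training pass: zero each activation independently with drop_prob."""
    return [0.0 if rng.random() < drop_prob else a for a in activations]


def dropout_test(activations, drop_prob=0.5):
    """Test pass: keep every unit, scale outputs by the keep probability."""
    return [a * (1.0 - drop_prob) for a in activations]


# Hypothetical chain: 8 layers, each with the same positive pre-activation.
pre_activations = [0.5] * 8
sigmoid_scale = backprop_scale(sigmoid_grad, pre_activations)
relu_scale = backprop_scale(relu_grad, pre_activations)
print(f"sigmoid: {sigmoid_scale:.2e}  relu: {relu_scale:.1f}")

# Dropout on a hypothetical hidden layer, seeded for repeatability.
rng = random.Random(0)
hidden = [0.2, 1.5, 0.7, 0.9]
print(dropout_train(hidden, rng))
print(dropout_test(hidden))
```

Because the sigmoid derivative is at most 0.25, eight layers shrink the signal by a factor of at least 0.25^8, while the ReLU chain leaves it unchanged for positive inputs. The dropout functions follow the train-time masking and test-time scaling scheme rather than the "inverted" variant common in current libraries, which rescales during training instead.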

Winning by 10.8 percentage points was impossible to ignore. Researchers who had spent careers designing handcrafted image descriptors were confronted with a network that learned better representations on its own. Yann LeCun, who had been working on convolutional networks since the 1980s, called AlexNet “an unequivocal turning point in the history of computer vision.” Within two years, every competitor at ImageNet used deep learning.

Hinton, LeCun, and Yoshua Bengio won the Turing Award in 2018. Hinton won the Nobel Prize in Physics in 2024 and later joked about the division of labour: “Ilya thought we should do it, Alex made it work, and I got the Nobel Prize.”

Deep learning started in a bedroom in Toronto, with two graphics cards and a graduate student who made it work. The field shifted fast: within two years, most major labs had reorganised around it. When people said AI, they now meant deep learning.

Further Reading

- Krizhevsky, A., Sutskever, I., & Hinton, G. E. (2012). “ImageNet Classification with Deep Convolutional Neural Networks.”
- Deng, J., Dong, W., Socher, R., Li, L., Li, K., & Fei-Fei, L. (2009). “ImageNet: A Large-Scale Hierarchical Image Database.”
- Quartz (2022). “The Inside Story of How AI Got Good Enough to Dominate Silicon Valley.”
- Pinecone (2023). “AlexNet and ImageNet: The Birth of Deep Learning.”