Home | A History of AI

Belief Propagation

“Probability theory is nothing but common sense reduced to calculation.” — Pierre-Simon Laplace, 1814

“When you have uncertainty, and you always have uncertainty,” Judea Pearl said, “rules aren’t enough.” Pearl had arrived at AI by accident. When semiconductors wiped out his job at a memory research lab in 1969, he called a friend at UCLA and took whatever position was available.

He spent years reading the field’s approaches to uncertain reasoning — fuzzy logic, belief functions, certainty factors — and became convinced it was avoiding something it already had the mathematics for. Bayes’ theorem had been available since 1763. It told you exactly how to update a belief when evidence arrived. Why was nobody using it?

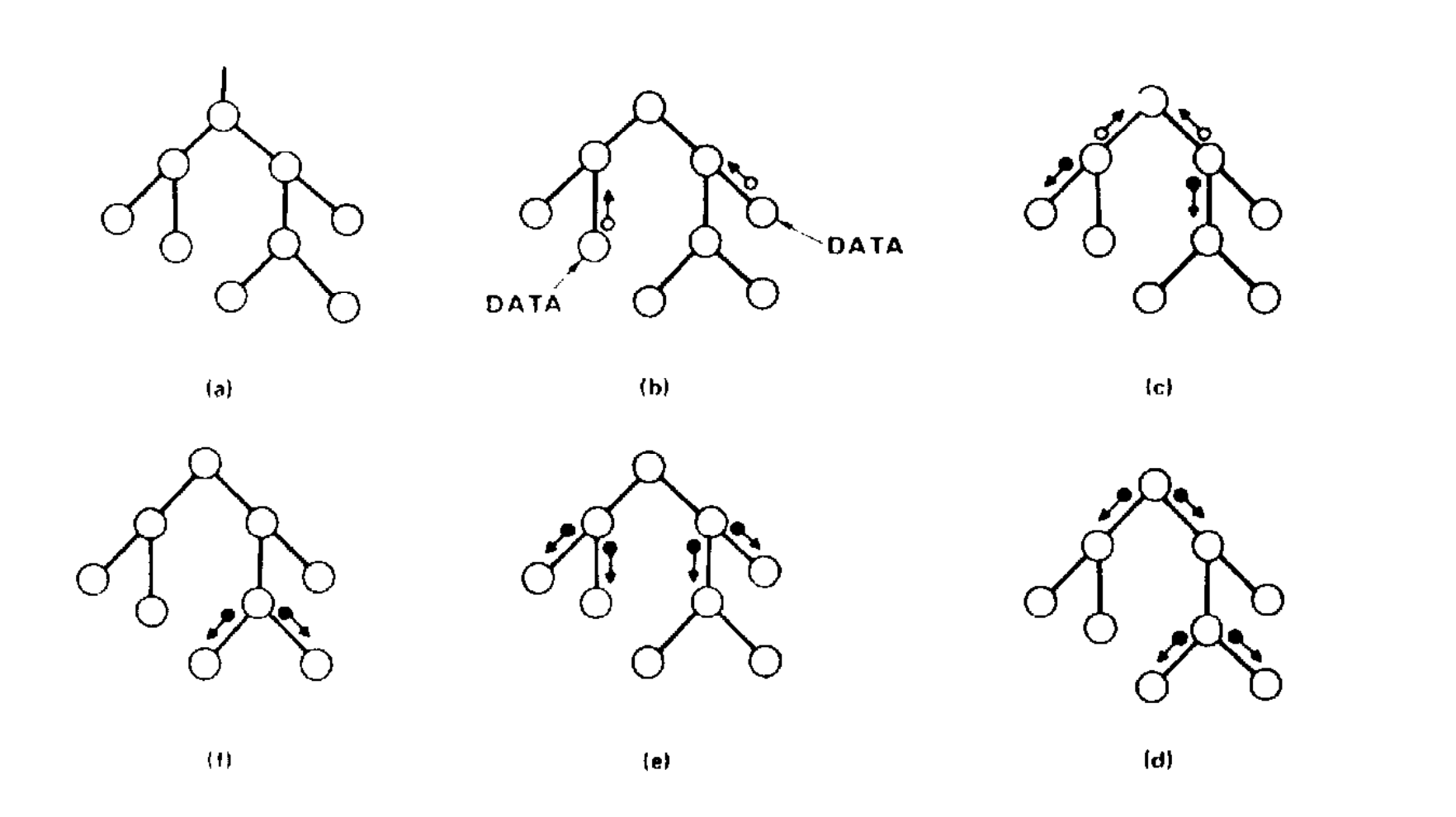

The answer he arrived at was Bayesian networks: a graph where each node is a variable and each edge represents how one variable influences another. A disease node connects to symptom nodes, each connection carrying a probability table derived from data. When evidence arrives, it propagates through the graph and the mathematics tells you the most probable explanation.
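As a minimal sketch of that update, assume a toy network with one disease node and two symptom nodes that are independent given the disease. Every probability below is invented for illustration, and the names (`P_DISEASE`, `P_SYMPTOM_GIVEN`, `posterior`) are hypothetical rather than taken from any real system.

```python
# Toy network: Disease -> Fever, Disease -> Rash.
# All probabilities are made-up illustrative values, not clinical data.
P_DISEASE = 0.01  # prior P(disease)

# P(symptom present | disease state), one small table per edge
P_SYMPTOM_GIVEN = {
    "fever": {True: 0.90, False: 0.10},
    "rash": {True: 0.70, False: 0.05},
}


def posterior(evidence: dict) -> float:
    """Return P(disease | evidence) using Bayes' theorem.

    `evidence` maps a symptom name to True (present) or False (absent).
    Symptoms are assumed independent given the disease state.
    """
    joint = {}
    for disease in (True, False):
        # Start from the prior for this disease state.
        p = P_DISEASE if disease else 1.0 - P_DISEASE
        # Multiply in the likelihood of each observed symptom.
        for symptom, present in evidence.items():
            p_present = P_SYMPTOM_GIVEN[symptom][disease]
            p *= p_present if present else 1.0 - p_present
        joint[disease] = p
    # Normalise so the two disease states sum to one.
    return joint[True] / (joint[True] + joint[False])
```

With these invented numbers, observing fever alone lifts the belief in the disease from 1% to roughly 8%, and observing fever and rash together lifts it to about 56%. A real network would have many more nodes and would need an inference algorithm rather than direct enumeration.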

The idea came from cognitive science. Pearl had been reading a 1976 paper by David Rumelhart on how children read, which showed how neurons at multiple levels pass messages back and forth to resolve ambiguity, for example whether a smudged word is “FAR” or “CAR” or “FAT.” He realised these messages had to be conditional probabilities. Bayesian reasoning was essential for passing them up and down the network and combining them correctly.
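The smudged-word example can be sketched in a few lines. This is only the bottom-up half of message passing, under the assumption that each letter position sends the word node a likelihood message and the word node multiplies them with its prior. The priors, the message values, and the function name `word_beliefs` are all hypothetical.

```python
# Hypothetical prior over candidate words (for example, from context).
WORD_PRIOR = {"FAR": 0.3, "CAR": 0.5, "FAT": 0.2}

# One likelihood message per letter position: how well the smudged ink
# at that position matches each candidate letter. Values are invented.
LETTER_MESSAGES = [
    {"F": 0.6, "C": 0.4},  # first letter looks a bit more like F
    {"A": 1.0},            # second letter is clearly A
    {"R": 0.7, "T": 0.3},  # third letter looks more like R
]


def word_beliefs(prior: dict, messages: list) -> dict:
    """Combine the prior with the letter messages and normalise."""
    scores = {}
    for word, p in prior.items():
        for position, message in enumerate(messages):
            # A letter the message does not mention gets zero support.
            p *= message.get(word[position], 0.0)
        scores[word] = p
    total = sum(scores.values())
    return {word: score / total for word, score in scores.items()}
```

With these numbers the first letter alone favours "F", yet the combined belief favours "CAR" (about 0.46) over "FAR" (about 0.42), because the prior and the third-letter message pull in the other direction. In a full scheme the word node would also send messages back down to sharpen the belief about each letter.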

Using Pearl’s framework, David Heckerman at Stanford built PATHFINDER, a system for diagnosing lymph-node diseases across 60 conditions and 130 symptoms. Get the diagnosis wrong and a patient could miss life-saving treatment. Experienced specialists frequently disagreed with each other on exactly these calls. PATHFINDER matched the accuracy of the leading expert whose knowledge it had been built from.

But Bayesian networks only answered one kind of question: given what I observe, what is most probable? Pearl called this the first rung of a ladder, and spent the next decade climbing it.

- Seeing. What is the probability of X given I observe Y? This is what Bayesian networks do, and what all statistics does. You watch a thousand smokers and note how many get cancer.

- Doing. What would happen if I intervened and set X to a particular value? Observational data cannot answer this, however much of it you have. Watching smokers tells you nothing about whether making someone stop smoking will reduce their cancer risk, which is why Pearl formalised do-calculus to handle it.

- Imagining. What would have happened if things had been different? The patient died. Would they have survived with a different drug? No dataset contains the answer to a counterfactual. Pearl developed the mathematics to reason about it anyway.

Pearl argued that these were three fundamentally different kinds of question, and that conflating them had caused enormous confusion in science, medicine, and economics for over a century.
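The gap between seeing and doing can be made concrete with a small hypothetical model. Assume a hidden factor Z (say, a gene) that makes people both more likely to smoke (X) and more likely to develop cancer (Y), with smoking also raising the risk directly. All numbers are invented. The interventional quantity is computed with backdoor adjustment, which is valid here only because the causal graph Z → X, Z → Y, X → Y is assumed to be known and Z is assumed to be measurable.

```python
# Invented probabilities for a three-variable model: Z -> X, Z -> Y, X -> Y.
P_Z = {0: 0.7, 1: 0.3}            # P(Z = z)
P_X1_GIVEN_Z = {0: 0.2, 1: 0.8}   # P(X = 1 | Z = z)
P_Y1_GIVEN_XZ = {                 # P(Y = 1 | X = x, Z = z), keyed by (x, z)
    (0, 0): 0.05, (1, 0): 0.10,
    (0, 1): 0.30, (1, 1): 0.40,
}


def p_y_seeing(x: int) -> float:
    """P(Y = 1 | X = x): what passive observation reports."""
    numerator = 0.0
    denominator = 0.0
    for z, p_z in P_Z.items():
        p_x = P_X1_GIVEN_Z[z] if x == 1 else 1.0 - P_X1_GIVEN_Z[z]
        numerator += p_z * p_x * P_Y1_GIVEN_XZ[(x, z)]
        denominator += p_z * p_x
    return numerator / denominator


def p_y_doing(x: int) -> float:
    """P(Y = 1 | do(X = x)) via backdoor adjustment over Z.

    Setting X by intervention cuts the Z -> X edge, so Z keeps its
    original distribution instead of the one seen among smokers.
    """
    return sum(p_z * P_Y1_GIVEN_XZ[(x, z)] for z, p_z in P_Z.items())
```

In this toy model, observed smokers have a cancer rate of about 29%, while forcing everyone to smoke would give 19%. The observational figure is inflated because smokers are disproportionately people who carry Z. Without the assumed graph, the data alone could not tell these two numbers apart.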

Pearl received the Turing Award in 2011 for the first revolution. By then he was deep into the second, and later wrote that “fighting for the acceptance of Bayesian networks in AI was a picnic compared with the fight I had to wage for causal diagrams.”

Further Reading

- Pearl, J. (1982). “Reverend Bayes on Inference Engines: A Distributed Hierarchical Approach.” AAAI-82 Proceedings.

- Heckerman, D., Horvitz, E., & Nathwani, B. (1992). “Toward Normative Expert Systems: The Pathfinder Project.” Methods of Information in Medicine.

- Pearl, J. (2018). “Theoretical Impediments to Machine Learning with Seven Sparks from the Causal Revolution.” arXiv.

- Pearl, J. (2018). “A Personal Journey into Bayesian Networks.” UCLA Cognitive Systems Laboratory.

- Pearl, J. (2023). “Judea Pearl, AI, and Causality: What Role Do Statisticians Play?” Amstat News.

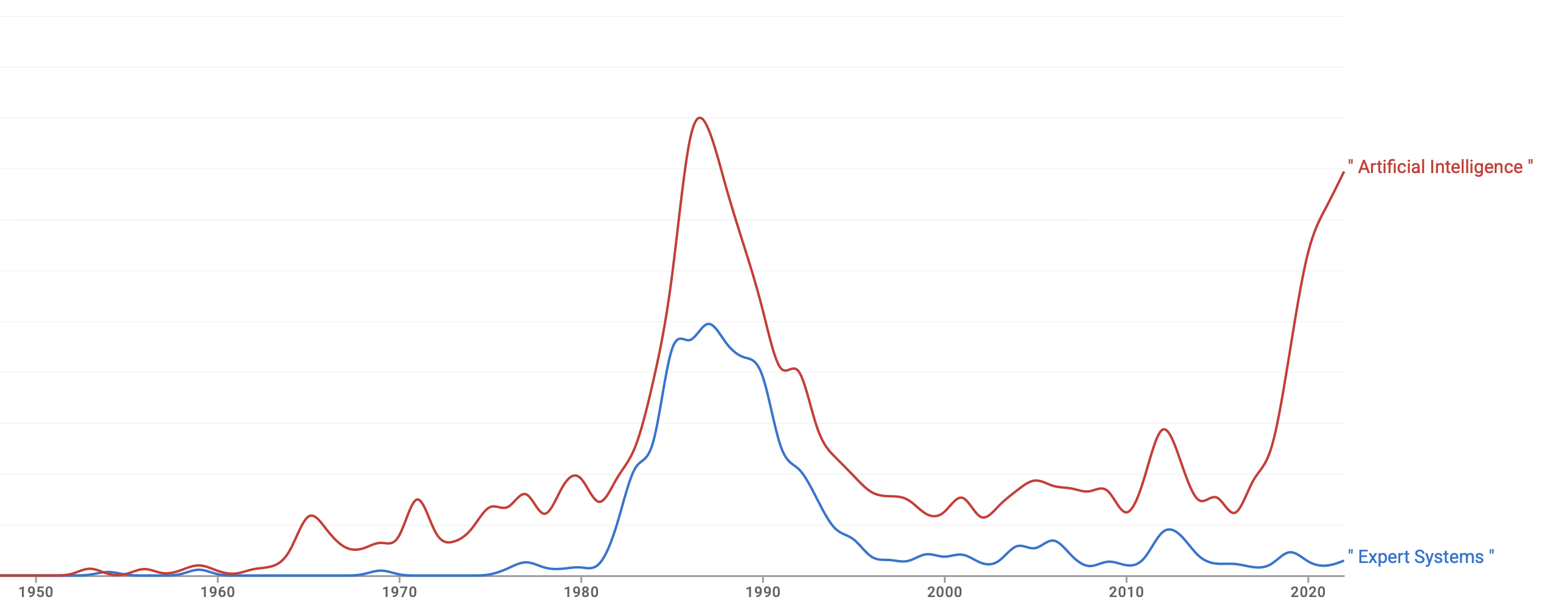

Mentions of 'expert systems' and 'artificial intelligence' in books, 1950–2022. Google Ngram Viewer.

Here is the opening from a session called “The Dark Ages of AI,” at the world’s leading AI conference.

“In spite of all the commercial hustle and bustle around AI these days, there’s a mood that I’m sure many of you are familiar with of deep unease among AI researchers who have been around more than the last four years or so. This unease is due to the worry that perhaps expectations about AI are too high, and that this will eventually result in disaster.”

The year was 1984, at the height of the expert systems boom, when companies were standing up AI groups staffed by people who had read one book and attended a one-day tutorial. Drew McDermott asked the room to imagine the worst case: that in five years’ time the big strategic bets had gone nowhere, the startups had all failed, and everybody had hurriedly changed the names of their research projects to something else.

Eerily, the future unfolded almost exactly like that, though it took three years, not five. Expert systems had a structural problem the boom years had papered over. They were only as good as the rules you could extract from experts, and experts struggled to articulate what they actually knew. Systems were brittle outside their narrow domains, and the more rules you added, the harder they became to maintain. XCON, the system that had saved DEC $25 million a year, now required 59 technical staff to maintain it and still couldn’t keep pace with the ever-changing product line.

When cheaper hardware arrived, the economics collapsed. Sun workstations undercut LISP machines on price and Symbolics went bankrupt. Government programmes wound down, funding dried up, and companies across the industry quietly shut their AI groups. The word itself became professionally toxic. Usama Fayyad, finishing his PhD in AI in 1991, later recalled that no company would hire anyone who worked in the field.

What looked like a collapse was closer to a dispersal. The researchers scattered into adjacent fields, took their ideas with them, and kept working under names that didn’t attract attention or scepticism. The foundations of everything we now call AI were laid during a period when nobody wanted to fund it. In 2002, Rodney Brooks noted: “There’s this stupid myth that AI has failed, but AI is all around you all the time.”

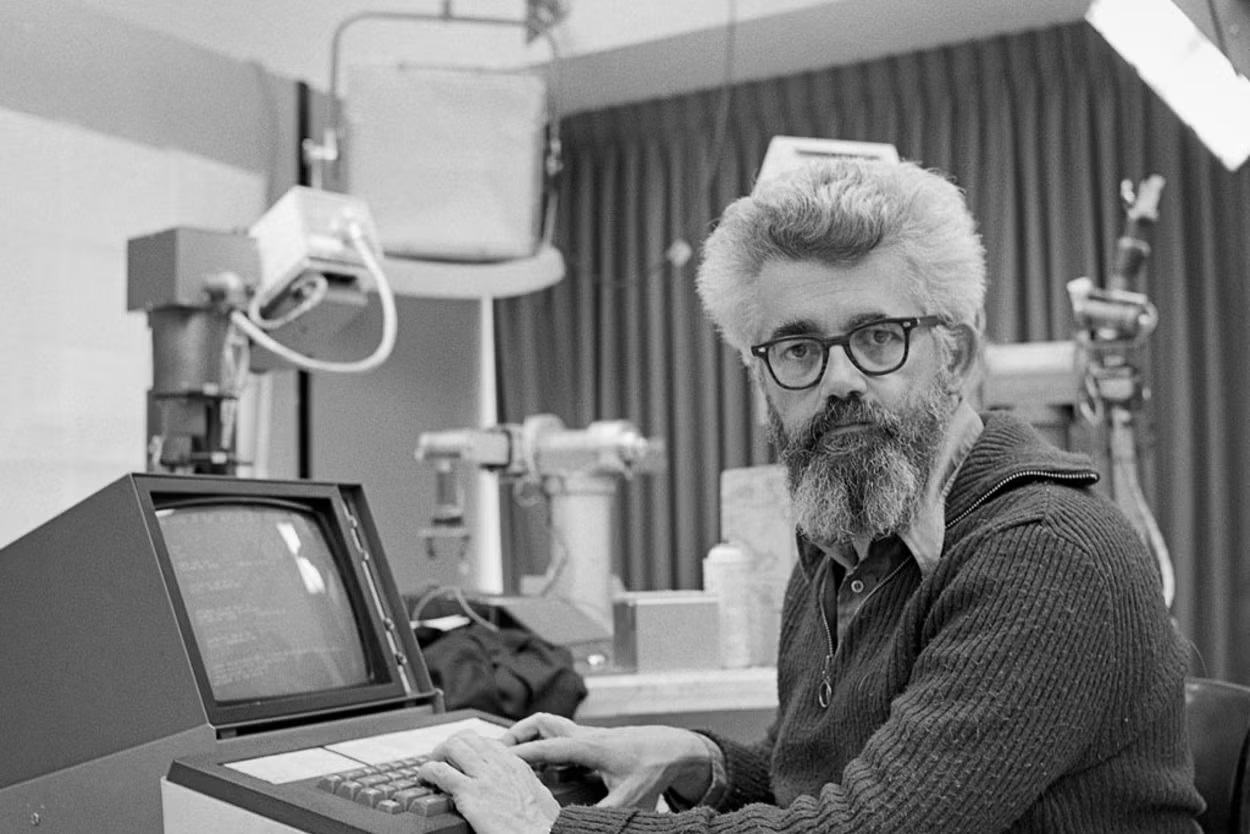

Expert systems console, Wikimedia Commons

“Knowledge is the only instrument of production that is not subject to diminishing returns.” — J.M. Clark, 1923

Your best salesperson just resigned. Twenty years of knowing which people matter, who to speak to when, and how decisions actually get made. None of that knowledge is in the CRM, so when they leave, it goes with them.

Every organisation carries knowledge it can’t locate. It lives in the people who’ve been there longest, embedded in the culture and inherited processes. It isn’t written down because no one can tell you exactly what it is.

In 1965, Edward Feigenbaum, a computer scientist at Stanford, thought intelligence required knowledge. Within a decade, leading researchers were publishing findings using a system he designed, without thinking twice about the AI behind it.

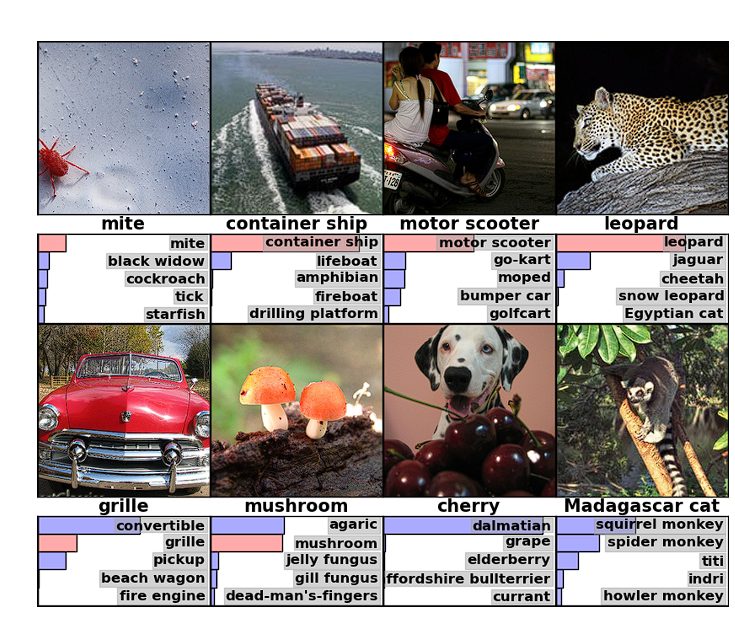

ImageNet test images

“That moment was pretty symbolic to the world of AI because three fundamental elements of modern AI converged for the first time.” — Fei-Fei Li, 2024

In 2012, a graduate student trained a neural network in his bedroom on two gaming GPUs. It beat every major AI lab in the world.

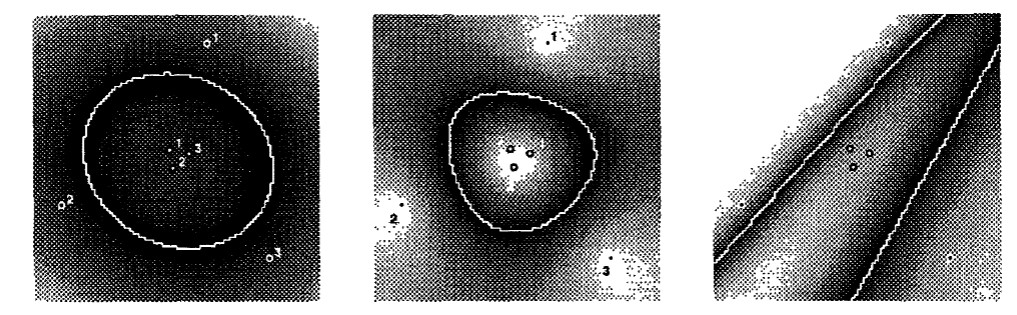

Original results from 1992 paper

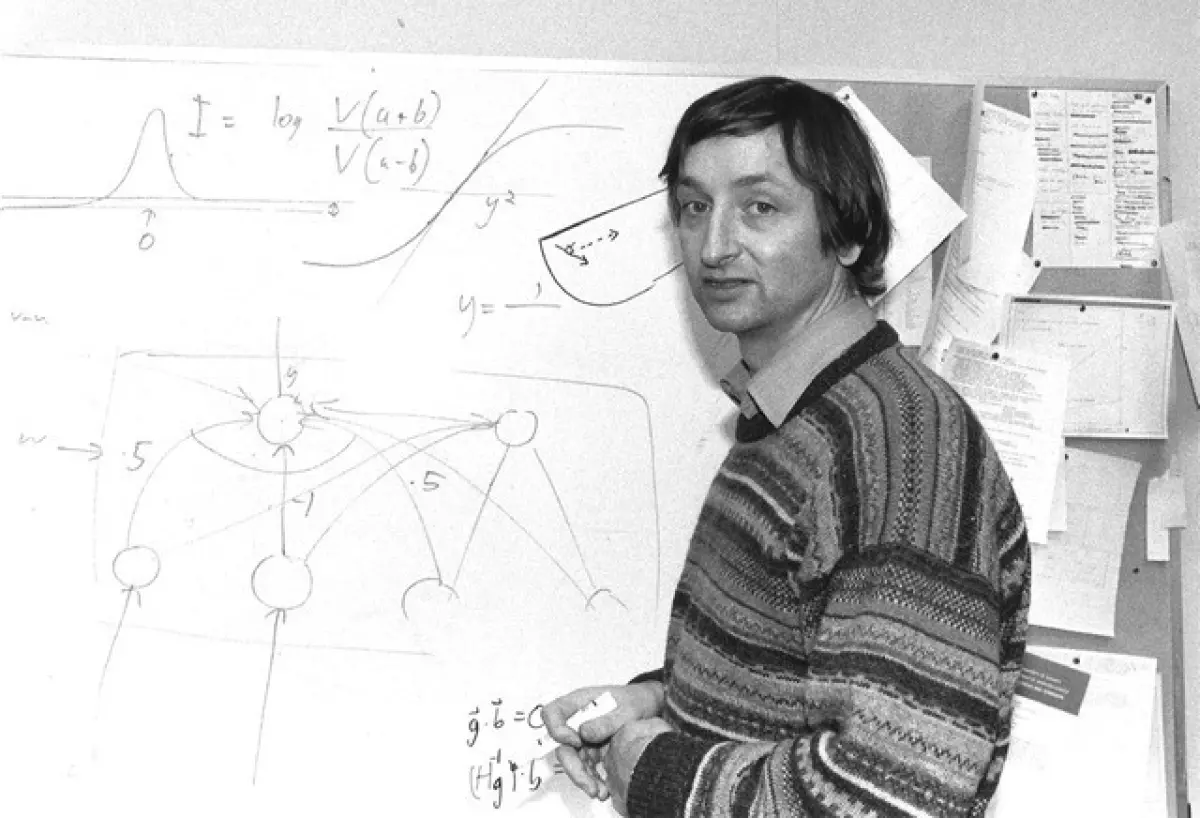

“Nothing is more practical than a good theory.” — Vladimir Vapnik

Vladimir Vapnik arrived at Bell Labs from Moscow in the early 1990s already in his 50s. He brought three decades of statistical learning theory the Western world had never seen. From 1961 to 1990, he had worked on one question. Under what conditions can you guarantee a learning algorithm generalises from training data? Mathematics that the Cold War had kept invisible.

Image: Ted Eytan, CC BY-SA 2.0, via Wikimedia Commons

“Artificial intelligence must be based on real human intelligence, which consists largely of applying old situations—and our narratives of them—to new situations.” — Roger Schank

Intelligence requires memory. You cannot expect machines to learn without remembering what worked. Everyone building enterprise AI is grappling with the same problem. How do we give AI systems persistent memory without the bloat problem?

The Null Stern hotel

“When one encounters a new situation one selects from memory a structure called a Frame. This is a remembered framework to be adapted to fit reality by changing details as necessary.” - Marvin Minsky, 1974

Building enterprise AI means teaching an LLM the messy reality of your business. You need to explain standard contracts but also the edge cases. You need to describe typical customers and the exceptions that break the pattern. You need to capture default processes and how they vary by region. In 1974, Marvin Minsky wrote an essay about exactly this problem. His ideas shaped knowledge representation and drove the expert systems boom of the 1980s.

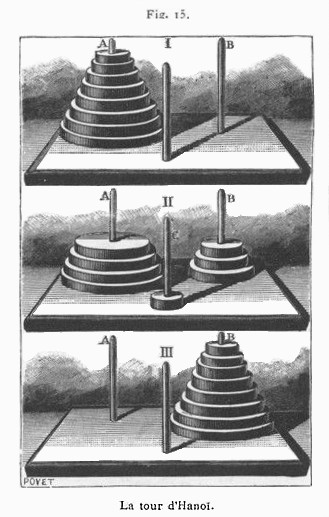

The Towers of Hanoi illustrated in La Nature

“Physical symbol systems are capable of intelligent action, and search is the essence of heuristic problem solving.” — Allen Newell & Herbert Simon, 1976

Problem solving involves considering different options, breaking things down, and searching for a solution. Playing chess, you consider possible moves and what your opponent may do in response, weighing up the options along the way. It quickly becomes apparent that you can’t look at every option, so you focus your attention and search intelligently. You rely on patterns and tactics picked up along the way.

Alongside knowledge representation, replicating intelligent search became the focus of early AI efforts. When we left Simon and Newell after Logic Theorist, they had recognized the core problem: you cannot search everything, so you must search intelligently. Inspired by George Polya’s work on problem solving, they built the General Problem Solver, demonstrating two important innovations.

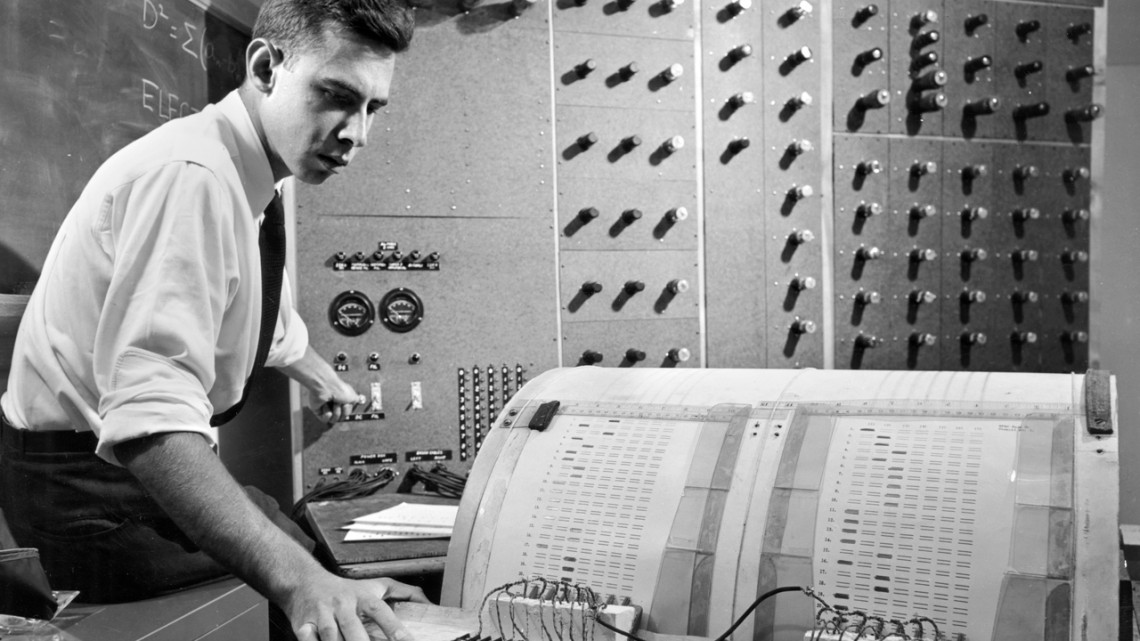

John McCarthy

“The central problem of artificial intelligence involves how to express the knowledge about the world that is necessary for intelligent behavior.” — John McCarthy

Arguably no one has had as long-lasting an impact on AI as John McCarthy. He shaped the field in ways few others did, and his contributions cast a long shadow for decades. He gave the field its name, posed central questions, and introduced the programming language that made exploring them possible. His key insight was to consider how knowledge should be represented.

Image: Canadian Institute for Advanced Research / Associated Press

“Give me another six months and I’ll prove to you that it works.” — Geoffrey Hinton

As we saw in the Perceptron post, by the end of the 1960s, funding for neural network research had dried up and most researchers had moved on to other promising approaches. Most, but not all. A small group of researchers believed.

Rosenblatt with his Mark I Perceptron. Cornell University

“The first machine which is capable of having an original idea.” — Frank Rosenblatt, 1958

The history of AI is a history of ideas and failure. This is true from the very beginning. Where Simon and Newell demonstrated intelligence by solving problems, others believed learning was the key. An intelligent machine should adapt from experience, improve with practice, and learn from mistakes.

Herbert Simon. Image: Richard Rappaport, CC BY 3.0, via Wikimedia Commons

“Machines will be capable, within twenty years, of doing any work a man can do.” — Herbert Simon, 1965

January 1956. Herbert Simon’s living room in Pittsburgh. His wife, three children, and several graduate students stand in a circle, each holding a 3x5 index card carrying instructions. Each person is a component of a program that doesn’t yet exist on any machine. Simon gives a signal. They begin passing messages, following the rules on their cards, executing the program by hand. They are humans simulating a computer designed to simulate human thinking. Simon would later recall, “Here was nature imitating art imitating nature.” If the program they are running works, it will prove that machines can think.

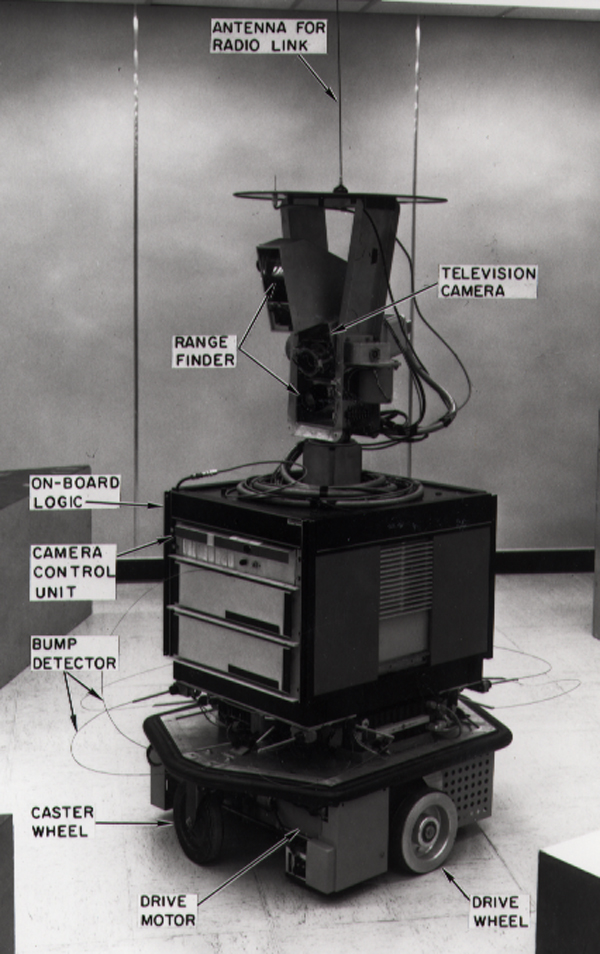

Shakey, SRI International, CC BY-SA 3.0, via Wikimedia Commons

The history of AI is a history of ideas and failure.

Ideas come from anywhere intelligence is evident. The field has borrowed from logic, neuroscience, evolutionary biology, cognitive psychology, even the behaviour of ants. Problem solving is how we recognise intelligence. So these ideas get tested by building systems to tackle problems that require intelligence. If a system handles a hard problem, it demonstrates something that looks like thinking. But once a system becomes useful, usefulness is what matters. Whether we still call it intelligent becomes irrelevant.